Partial Information Attacks on Real-world AI

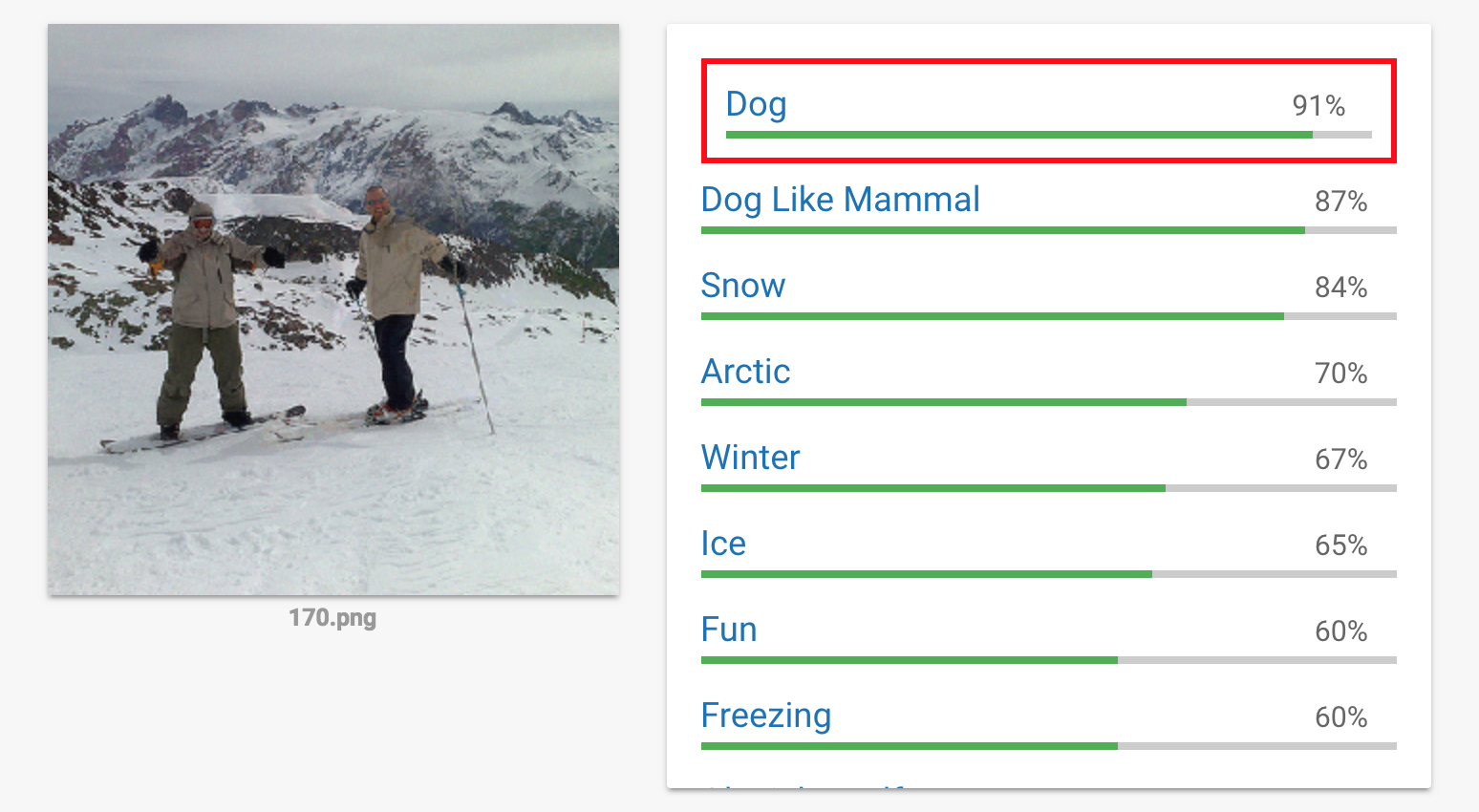

20 Dec 2017

We’ve developed a query-efficient approach for finding adversarial examples for black-box machine learning classifiers. We can even produce adversarial examples in the partial information black-box setting, where the attacker only gets access to “scores” for a small number of likely classes, as is the case with commercial services such as Google Cloud Vision (GCV).

Many methods for generating adversarial examples, including our previous work on 3D physical-world adversarial examples, rely on the ability to differentiate through the targeted neural network. This “white-box” assumption rarely holds for machine learning systems deployed in the wild. More commonly, attacks must work in the “black-box” setting, where the attacker can make only a limited number of queries to the network and has restricted knowledge of the system’s internals.

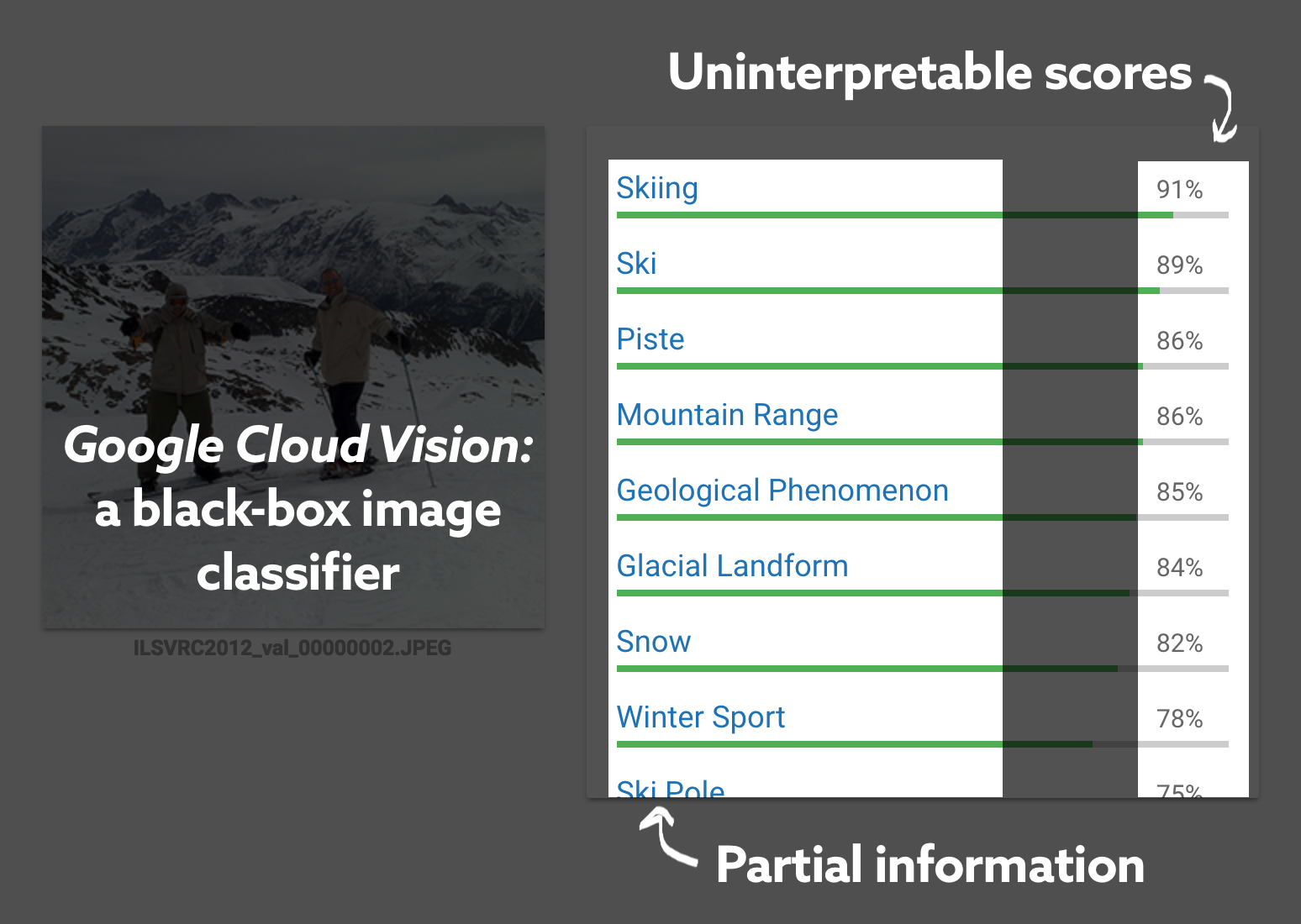

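Furthermore, commercial systems like GCV often output only a limited and variable number of top classes, each with an uninterpretable “score”. This makes targeted attacks difficult: the target class often does not appear among the returned classes at all, so the attacker has no score for it to optimize.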
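To make the partial-information setting concrete, here is a minimal sketch of such an interface, assuming a model that computes a full softmax but reveals only its top `k` classes. The function `partial_info_query`, the labels, and the logits are hypothetical; real services such as GCV return a variable number of labels, and their scores need not be softmax probabilities.

```python
import math
from typing import Dict, Sequence


def partial_info_query(logits: Sequence[float], labels: Sequence[str], k: int) -> Dict[str, float]:
    """Simulate a partial-information classifier API (hypothetical).

    The model computes a full softmax, but the caller only sees the k
    highest-scoring labels; every other class is silently dropped.
    """
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    ranked = sorted(zip(labels, (e / total for e in exps)), key=lambda p: p[1], reverse=True)
    return dict(ranked[:k])


labels = ["cat", "dog", "car", "skier"]
scores = partial_info_query([2.0, 1.0, 0.5, -1.0], labels, k=2)
print(sorted(scores))       # → ['cat', 'dog']
print(scores.get("skier"))  # → None
```

From the attacker's side, a targeted objective such as "increase the score of `skier`" cannot even be evaluated on this output, since the target class is simply absent.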

Our approach works by starting with an image from the target class and gradually turning it into an image that looks like the original input, while keeping the target class among the top classes in the output. Using this method, we demonstrate what is, to our knowledge, the first targeted attack against a commercial classifier in the partial information setting.
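The strategy above can be sketched on a toy problem. This is a simplified illustration under stated assumptions, not the authors' algorithm: it assumes an L-infinity perturbation bound `eps` around the original image, tries to shrink that bound in steps (halving the step after a failure), and, when a shrink would push the target class out of the top-k, takes a signed NES step to raise the target's score inside the current bound. The toy 4-class "classifier", all helper names, every hyperparameter, and the choice to score a missing target as 0 are hypothetical.

```python
import math
import random
from typing import Callable, Dict, List, Sequence, Tuple

Vector = List[float]
Oracle = Callable[[Vector], Dict[int, float]]


def clip_to_ball(x: Sequence[float], center: Sequence[float], eps: float) -> Vector:
    """Project x onto the L-infinity ball of radius eps around center."""
    return [min(max(xi, ci - eps), ci + eps) for xi, ci in zip(x, center)]


def nes_gradient(f: Callable[[Vector], float], x: Vector, sigma: float,
                 n: int, rng: random.Random) -> Vector:
    """Antithetic NES gradient estimate of f at x (costs 2 * n queries)."""
    grad = [0.0] * len(x)
    for _ in range(n):
        u = [rng.gauss(0.0, 1.0) for _ in x]
        diff = (f([a + sigma * b for a, b in zip(x, u)])
                - f([a - sigma * b for a, b in zip(x, u)]))
        grad = [g + diff * b for g, b in zip(grad, u)]
    return [g / (2 * n * sigma) for g in grad]


def partial_info_attack(top_k: Oracle, x_orig: Vector, x_start: Vector, target: int,
                        eps_goal: float = 0.05, eps_decay: float = 0.05,
                        lr: float = 0.05, max_iters: int = 500,
                        seed: int = 0) -> Tuple[Vector, float]:
    """Shrink an L-infinity ball around x_orig while keeping `target` in the top-k."""
    rng = random.Random(seed)
    if target not in top_k(x_start):
        raise ValueError("x_start must already have the target class in the top-k")
    # Begin at an image of the target class; the bound is its distance to x_orig.
    x_adv = list(x_start)
    eps = max(abs(a - b) for a, b in zip(x_adv, x_orig))

    def target_score(x: Vector) -> float:
        # Assumption: a target that drops out of the top-k scores 0.
        return top_k(x).get(target, 0.0)

    for _ in range(max_iters):
        if eps <= eps_goal:
            break
        # 1) Try to tighten the bound by projecting onto a smaller ball.
        new_eps = max(eps - eps_decay, eps_goal)
        candidate = clip_to_ball(x_adv, x_orig, new_eps)
        if target in top_k(candidate):
            x_adv, eps = candidate, new_eps
            continue
        # 2) Tightening failed: shrink more cautiously next time and take a
        #    signed NES step on the target score inside the current ball.
        eps_decay /= 2
        grad = nes_gradient(target_score, x_adv, sigma=0.01, n=20, rng=rng)
        # (g > 0) - (g < 0) is the sign of g as an int: 1, 0, or -1.
        stepped = [a + lr * ((g > 0) - (g < 0)) for a, g in zip(x_adv, grad)]
        candidate = clip_to_ball(stepped, x_orig, eps)
        if target in top_k(candidate):
            x_adv = candidate
        else:
            lr /= 2  # back off if the step loses the target class
    return x_adv, eps


def toy_top_k(k: int) -> Oracle:
    """Hypothetical 4-class toy model whose logit for class j is simply x[j]."""
    def query(x: Vector) -> Dict[int, float]:
        m = max(x)
        exps = [math.exp(v - m) for v in x]
        total = sum(exps)
        ranked = sorted(enumerate(e / total for e in exps),
                        key=lambda p: p[1], reverse=True)
        return dict(ranked[:k])  # only the k most likely classes are revealed
    return query


oracle = toy_top_k(k=2)
x_orig = [1.0, 0.8, 0.0, 0.0]    # classified as class 0
x_start = [0.1, 0.0, 0.0, 1.0]   # classified as class 3, the target
x_adv, eps = partial_info_attack(oracle, x_orig, x_start, target=3)
print(round(eps, 3), sorted(oracle(x_adv)))
```

Every accepted step keeps `x_adv` within `eps` of `x_orig` with class 3 in the top two. In this toy, `eps` starts at 1.0 but can never fall to 0.4 or below, because class 3 needs `eps > 0.8 - eps` to outrank class 1.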

This approach is enabled by a highly query-efficient method for generating black-box adversarial examples using natural evolution strategies (NES), a technique introduced by Wierstra et al. We show that NES is more effective and two to three orders of magnitude more query-efficient than previous approaches to black-box adversarial examples, such as substitute networks and coordinate-wise gradient estimation. Using NES as a query-efficient black-box gradient estimator, we can perform previously intractable attacks, such as the partial-information attack described above.
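As a rough illustration of how NES turns score queries into a gradient estimate, here is a minimal pure-Python sketch of an antithetic NES estimator. It is not the authors' code: the name `nes_gradient`, the parameters `sigma` and `n`, and the linear test score are hypothetical, and a real attack would estimate the gradient of the target-class score with respect to every pixel using a vectorized array library.

```python
import random
from typing import Callable, List, Optional, Sequence


def nes_gradient(
    f: Callable[[List[float]], float],
    theta: Sequence[float],
    sigma: float = 0.001,
    n: int = 50,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Estimate the gradient of a black-box score f at theta with NES.

    Uses antithetic Gaussian sampling: each direction u_i is evaluated at
    theta + sigma*u_i and theta - sigma*u_i, giving the estimate
        g = 1/(2*n*sigma) * sum_i [f(theta + sigma*u_i) - f(theta - sigma*u_i)] * u_i
    which costs 2*n queries and needs only the scalar outputs of f.
    """
    if rng is None:
        rng = random.Random()
    grad = [0.0] * len(theta)
    for _ in range(n):
        u = [rng.gauss(0.0, 1.0) for _ in theta]
        f_plus = f([t + sigma * ui for t, ui in zip(theta, u)])
        f_minus = f([t - sigma * ui for t, ui in zip(theta, u)])
        for j, uj in enumerate(u):
            grad[j] += (f_plus - f_minus) * uj
    return [g / (2 * n * sigma) for g in grad]


# Sanity check on a linear score whose true gradient is [1, -2, 3].
score = lambda x: 1.0 * x[0] - 2.0 * x[1] + 3.0 * x[2]
est = nes_gradient(score, [0.5, 0.5, 0.5], sigma=0.01, n=5000, rng=random.Random(0))
print([round(e, 2) for e in est])  # should be close to [1.0, -2.0, 3.0]
```

Each sample costs two queries regardless of the input dimension, whereas a symmetric finite-difference estimate computed coordinate by coordinate needs two queries per coordinate. This offers one intuition for why NES can be far cheaper in queries on high-dimensional inputs such as images.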

You can download our original and adversarial images and try it yourself with the Google Cloud Vision demo.

To learn more about our method and results, see https://www.labsix.org/papers/#blackbox.

Acknowledgements

Special thanks to Nat Friedman and Daniel Gross.